What if… AI accelerates science?


Increasingly intelligent AI has the potential to boost productivity across many sectors. However, time savings are only one way in which this technology could transform our lives. Could AI soon trigger a wave of scientific discoveries? If so, the way scientific results are communicated may need to change. This would have both advantages and disadvantages.

"If it does not help to do a thing once, it will not help to do it twice… or ever."

Paul Samuelson, 1979

This is not exactly the kind of sentence you would expect to see in an economics paper. Yet this is precisely how Paul Samuelson expressed his views on optimal investment behaviour. Nearly a decade after receiving the Nobel Prize in 1970, Samuelson was still embroiled in a bitter debate with Harry Markowitz, among others. What was at stake? The Kelly criterion. This is a method for determining what proportion of your wealth you should stake on an uncertain investment in order to maximise the expected logarithm of your wealth, and with it the long-run growth rate of your capital.

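However, following the Kelly criterion requires enormous risk tolerance: a large proportion of one's wealth is staked and can therefore also be lost. Samuelson strongly rejected the idea that this strategy suits everyone. He demonstrated mathematically that maximising the log of wealth is optimal only for investors with one particular attitude to risk, and that a long investment horizon does not change this.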
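To make the mechanics concrete, here is a minimal Python sketch for the simplest textbook case: a repeated bet with win probability p and net odds b, for which the Kelly fraction is f* = p - (1 - p)/b. The function names and the constant-relative-risk-aversion utility (with risk-aversion parameter gamma) are illustrative assumptions, not taken from Samuelson's or Markowitz's papers. Setting gamma = 1 (log utility) recovers the Kelly stake; a more risk-averse investor (gamma = 2) optimally stakes roughly half as much.

```python
import math


def kelly_fraction(p: float, b: float) -> float:
    """Kelly stake for a binary bet: win probability p, net odds b (win b per 1 staked)."""
    return p - (1.0 - p) / b


def crra_utility(wealth: float, gamma: float) -> float:
    """Constant-relative-risk-aversion utility; gamma = 1 is log utility."""
    if gamma == 1.0:
        return math.log(wealth)
    return wealth ** (1.0 - gamma) / (1.0 - gamma)


def expected_utility(f: float, p: float, b: float, gamma: float) -> float:
    """Expected utility after one bet, starting from wealth 1 and staking fraction f."""
    win = crra_utility(1.0 + f * b, gamma)
    lose = crra_utility(1.0 - f, gamma)
    return p * win + (1.0 - p) * lose


def best_fraction(p: float, b: float, gamma: float, steps: int = 1000) -> float:
    """Grid-search the stake in [0, 1) that maximises expected utility."""
    grid = [i / steps for i in range(steps)]  # excludes f = 1 (staking everything)
    return max(grid, key=lambda f: expected_utility(f, p, b, gamma))


if __name__ == "__main__":
    p, b = 0.6, 1.0  # hypothetical bet: 60% chance to win, even odds
    print(f"Kelly formula:      {kelly_fraction(p, b):.3f}")       # 0.200
    print(f"Optimum, gamma = 1: {best_fraction(p, b, 1.0):.3f}")  # 0.200
    print(f"Optimum, gamma = 2: {best_fraction(p, b, 2.0):.3f}")  # 0.101
```

With independent repeated bets and this family of utility functions, the optimal stake does not depend on how many rounds are played (a standard textbook result), which is the core of Samuelson's objection: a long horizon does not turn a more cautious investor into a Kelly bettor.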

Samuelson, with a knockout

But even more powerful was his 1979 paper, 'Why we should not make mean log of wealth big though years to act are long'. In it, he revisits his argument using only words of one syllable, save for the very last word. His message to his counterparts was clear: 'I use simple language... because you are fools.'

Score: Samuelson won by knockout... at least within the academic world. However, major investors (and gamblers) later made eager use of the criterion and related ideas.

It is doubtful whether AI would interpret Samuelson's text in the same way as his colleagues did at the time. His colleagues could place the paper within the literature but, more importantly, they understood the context of the debate and the force of Samuelson's message. This is an exceptional document, but the underlying question comes up far more often: how can we ensure that AI understands scientific papers?

A data centre full of geniuses… and referees

A group of researchers developed software called OpenEval. They did so by comparing thousands of first drafts of papers with the comments provided by referees. These referees act as gatekeepers: researchers must get past them before their work can be published in scientific journals.

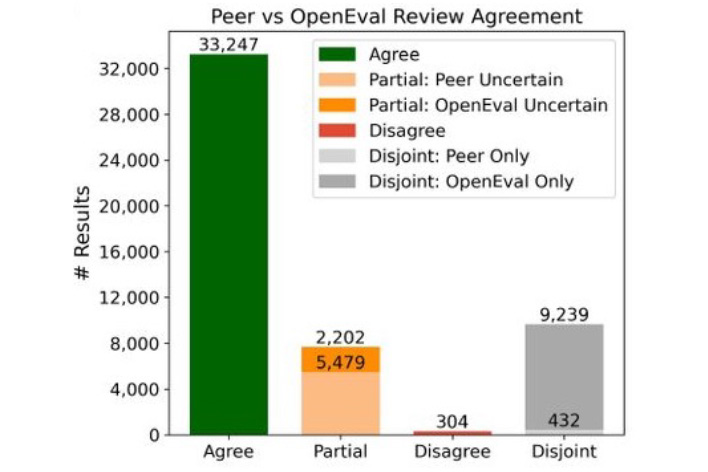

The researchers used LLMs to extract claims from draft papers. These claims are essentially the research findings, embedded in the documents in various forms (e.g. graphs, tables and running text). They then had OpenEval evaluate these claims. For 80% of the claims examined, the software reached the same assessment as the referees. In fewer than 1% of cases did the two assessments differ significantly.

This fits a broader trend: AI is becoming increasingly useful for scientific research. Recently, the outspoken economist John Cochrane even praised the AI review tool 'Refine'. According to Cochrane, the comments the tool provided on his work were "the best he has received in his entire career", and he is no novice in the field.

The authors therefore conclude that AI-driven evaluation is highly promising. Currently, referees are often unpaid or underpaid professors who provide feedback on new submissions within their field. This process can be slow and very frustrating for inexperienced researchers, who often have to rework their papers several times before the referees approve them.

AI could therefore significantly speed up this process. But is the traditional paper still the most suitable format for communicating science?

A new standard, then?

The developers of OpenEval point out that using LLMs to extract claims from published work requires a lot of computing power and is not free of errors. Rather than extracting the results after the fact, they advocate recording them in a different format at the source. They recommend making academic work directly 'machine-readable'. In other words, they are calling for a new standard for academic papers.

This would offer several clear advantages: a database of claims would drastically reduce the cost of identifying which hypotheses have already been studied. Claims in such a database could also be directly linked to the methods used and the underlying data, thus increasing their credibility.
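The article does not spell out what such a database would look like. The Python sketch below is a minimal, hypothetical schema: the Claim and ClaimRegistry names and all their fields are assumptions, not the OpenEval developers' proposal. Each claim carries links to its method and data, and claims are indexed by a normalised hypothesis, so checking whether a hypothesis has already been studied becomes a single dictionary lookup rather than a fresh LLM pass over full papers.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One machine-readable research claim (hypothetical schema)."""
    claim_id: str
    hypothesis: str  # the hypothesis tested, in plain words
    finding: str     # e.g. "supported", "rejected", "inconclusive"
    method: str      # identifier linking to the method used
    dataset: str     # identifier linking to the underlying data
    source: str      # identifier of the paper the claim comes from


class ClaimRegistry:
    """Indexes claims by normalised hypothesis for cheap 'already studied?' lookups."""

    def __init__(self) -> None:
        self._by_hypothesis: dict[str, list[Claim]] = defaultdict(list)

    @staticmethod
    def _normalise(hypothesis: str) -> str:
        # Case- and whitespace-insensitive key; a real system would need far richer matching.
        return " ".join(hypothesis.lower().split())

    def add(self, claim: Claim) -> None:
        self._by_hypothesis[self._normalise(claim.hypothesis)].append(claim)

    def studied(self, hypothesis: str) -> list[Claim]:
        """All recorded claims that test this hypothesis (empty list if none)."""
        return list(self._by_hypothesis.get(self._normalise(hypothesis), []))


if __name__ == "__main__":
    registry = ClaimRegistry()
    # Placeholder values, not a real research result.
    registry.add(Claim("c1", "X raises Y", "supported", "method-123", "dataset-456", "paper-789"))
    print(len(registry.studied("  x RAISES  y ")))  # 1
    print(len(registry.studied("X lowers Y")))      # 0
```

Keeping method and dataset as separate fields is what would let a reader, or a referee tool, jump from a claim straight to the evidence behind it.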

However, if scientific work is communicated solely through lists of claims, there is a major drawback.

Samuelson’s text did more than simply present the results of his work. The format he chose, using very simple words, played a significant role in how scientists later engaged with his ideas. This is an important insight.

Today, scientific documents are used for more than just conveying claims. They often tell a specific story about the research conducted, the reasons behind it, and the relevance of the results. This story helps determine how and whether future research will be carried out.

For example, it was the famous 'two Jans' paper on market power and its economic implications that led me to specialise in Industrial Organisation during my research master's degree. Like all carriers of information, papers compete with one another for the scarce attention of consumers, readers and potential research collaborators. In that sense, Samuelson was actually doing marketing.

The only constant is change

What is happening to the academic paper today is essentially nothing more than the well-known Silicon Valley motto: “unbundle, rebundle, repeat”.

Until recently, the paper bundled several functions: it shared research results in a way that allowed referees to evaluate them, and it allowed other researchers to use those results and motivated them to build on them. In order to better harness the potential of AI, it could be useful to separate these functions. This could be achieved by introducing a new standard, as the OpenEval developers suggest. However, this is far from straightforward.

Academic publishing is a highly closed system. A handful of publishers dominate the market and charge research institutions large sums for access to scientific research. This attracts considerable criticism from researchers, who supply the product free of charge (or sometimes even pay to publish) and are then charged for access to it.

Some are working hard to change this: some by building open-access platforms such as arXiv, others by breaking the rules, like Alexandra Asanovna Elbakyan, the 'pirate queen' of science who founded Sci-Hub.

The final word goes to AI itself. Consensus, an AI-powered search engine for scientific literature, already makes researchers' work easier today. It draws on millions of papers to give a well-founded answer to simple yes/no questions.

The question “Does scientific research speed up when AI can read papers more easily?” receives a classic economic answer from Consensus: “Incentives matter.”

This economist could not have summarised it better.